I have made quite a bit of progress on my project this summer. Before I get into that, here is a short refresher on my project:

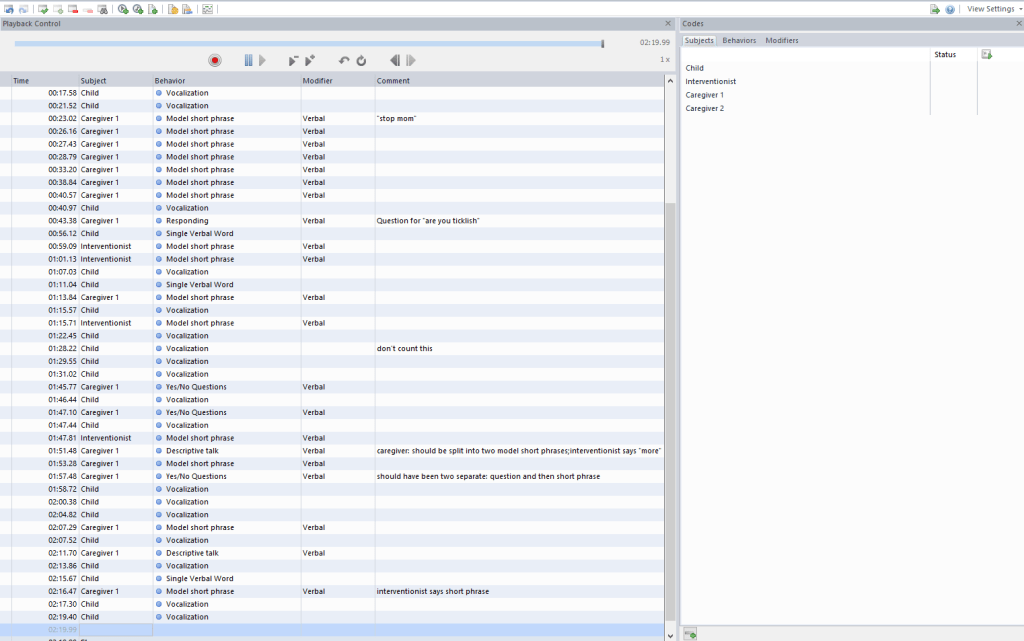

Three interventionists held intervention sessions in the homes of families with a D/deaf or Hard-of-Hearing (DHH) child as part of a larger, previously conducted study. During these sessions, they worked on teaching and implementing strategies to encourage the child's communication development. I am coding the adults' communication strategies and the child's communication acts during two-minute clips of each session to examine how these strategies, including the use of sign, relate to a DHH child's communication development.

Now back to the update:

The first step was to sort through the videos from the overarching study to find clips to use for practice. I watched sessions led by interventionists who would not be included in my study and looked for moments of high interaction between the child and adult(s). I noted sections lasting 2 to 2.5 minutes. My mentor professor and her graduate research assistant helped me recruit a second coder, Keely, for reliability.

Keely and I began practicing applying the codes in early May. We would each go through one video individually, first coding the child's communication acts, then the interventionist's communication strategies, and then the caregivers' communication strategies. (Coding essentially means placing a marker showing which strategy or communication act occurred and who did it.) Since I had developed the coding scheme, this was also a chance for Keely to ask me questions about how to code a section or apply a code. Once we had each coded all three members of the triad in the clip, we went through the video together, comparing what we had marked. We discussed our differences and how we felt about the definitions and application of the strategies and acts.

The software we use, NOLDUS Observer, lets us run a reliability analysis that compares two coders' coding of the same video and counts the agreements and disagreements between them. It reports the agreements as a percentage of all matched comparisons (agreements plus disagreements), which gives us a reliability score. The target score for this project is 80%.
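To make the arithmetic concrete, here is a minimal Python sketch assuming the score is plain percent agreement: agreements divided by agreements plus disagreements. Observer also has to decide which codes from the two coders line up in time before it can count anything, and this sketch skips that step entirely. The function name `percent_agreement` and the counts in the example are made up for illustration; they are not results from our videos.

```python
def percent_agreement(agreements: int, disagreements: int) -> float:
    """Return agreements as a percentage of all compared codes.

    Assumes the agreement/disagreement counts have already been produced
    (in practice, by the software matching the two coders' markers).
    """
    total = agreements + disagreements
    if total == 0:
        raise ValueError("no comparisons to score")
    return 100.0 * agreements / total


TARGET = 80.0  # project target reliability, in percent

# Hypothetical counts for one practice clip (not real study data)
score = percent_agreement(agreements=42, disagreements=14)
print(f"{score:.1f}% (target met: {score >= TARGET})")
```

With these made-up counts, 42 of 56 comparisons agree, so the script prints `75.0% (target met: False)`.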

At first, our scores were in the 50s and 60s. This was probably because we kept changing the codes as we ran into particular cases in the practice videos. Definitions were tweaked to be clearer or changed to better fit what I needed for the study. Non-examples and notes were added to make things more explicit and thorough. Because I was worried about finishing coding by the end of the summer, as laid out in my project plan, we began going through two videos per session.

I found that we discussed the codes more and asked each other more questions, but our reliability scores weren't going up. After a while, I realized that I was getting a little tired of coding after one video, especially since we were meeting every day, so I found myself rushing through the videos. If I'm being perfectly honest, I also stopped going back to check my codes because I had gotten lazy and overconfident. When Keely and I went through the videos together, I would see acts or strategies I had clearly missed or codes I had applied incorrectly. Since this is my project, I figured I needed to change things; I should be the one who cares most about it.

Instead, we went back to coding one video per session and then checking the reliability score. I decided that if the score was below 70%, we would code another video, but if it was 70% or higher, there would be no need to do another one. Since I was getting burnt out and therefore careless, I told Keely (and reminded myself) that we could take it slow and that each of us should watch through the video once with all of our codes marked before we reviewed it together. Our scores jumped considerably: we have been at 70% or above for the past few videos. I'm really glad the slump didn't get to us and that 80% now seems much more achievable.
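The stopping rule is simple enough to write down as a tiny Python function. This is only a sketch: `next_step` and the example scores are hypothetical, and the 70% cutoff is the practice threshold for deciding whether to code another video that day, not the 80% project target.

```python
def next_step(score: float, threshold: float = 70.0) -> str:
    """Decide what to do after checking one practice video's reliability score.

    Below the threshold, we code another video; at or above it, we stop.
    """
    return "code another video" if score < threshold else "stop for the session"


# Hypothetical scores, just to show both branches (70.0 counts as "or over")
for score in (65.0, 70.0, 78.5):
    print(score, "->", next_step(score))
```

Note that the comparison is strict (`<`), so a score of exactly 70% ends the session, matching the "70% or over" wording of the rule.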

I am planning for us to reach 80% reliability consistently by the end of June so that we can begin the next phase of reliability. This will involve randomly selecting 30% of the sessions for both of us to code independently. From these, I will calculate our interrater reliability to report. Once the randomly selected videos have been coded, Keely and I will each work through the rest of the sessions separately.
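One way the random 30% draw could be done is sketched below in Python. The session IDs and the function name `select_reliability_sample` are hypothetical, and two choices are my own assumptions rather than part of the study protocol: rounding up, so the double-coded sample never falls below 30%, and an optional fixed seed, so the draw can be reproduced and documented.

```python
import random


def select_reliability_sample(session_ids, percent=30, seed=None):
    """Randomly choose `percent` of sessions for both coders to code.

    Returns (double_coded, single_coded). Uses integer arithmetic to round
    up, which avoids floating-point surprises such as n * 0.3 landing just
    above or below a whole number.
    """
    n = len(session_ids)
    k = (n * percent + 99) // 100  # ceiling of n * percent / 100
    rng = random.Random(seed)  # seeded generator makes the draw repeatable
    double_coded = rng.sample(session_ids, k)
    chosen = set(double_coded)
    single_coded = [s for s in session_ids if s not in chosen]
    return sorted(double_coded), single_coded


# Hypothetical session IDs; the real study's sessions would go here
sessions = [f"session_{i:02d}" for i in range(1, 21)]
double, single = select_reliability_sample(sessions, seed=2024)
print(len(double), "double-coded;", len(single), "to split between coders")
```

With 20 hypothetical sessions, 6 are drawn for independent double coding and the remaining 14 are left to divide between the two coders.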